TORONTO (AP) – At 77, Geoffrey Hinton has a new calling in life. Like a modern-day prophet, the Nobel laureate is warning about the risks of uncontrolled and unregulated artificial intelligence.

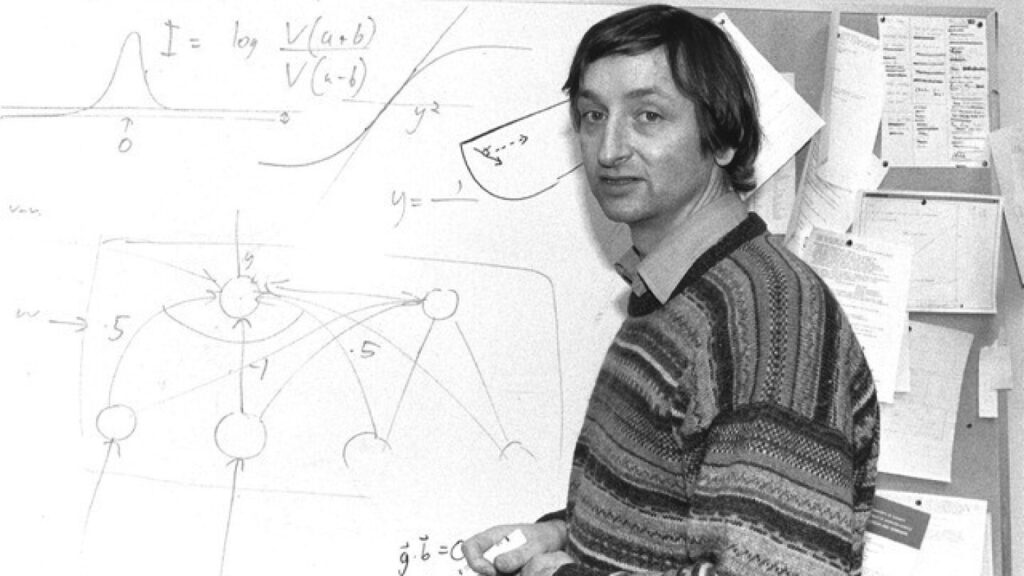

Frequently referred to as the “Godfather of AI,” Hinton is known for his pioneering work on deep learning and neural networks, which helped lay the foundation for the AI technology widely used today. Feeling “a bit responsible,” he began speaking publicly about his concerns in 2023 after leaving his job at Google.

As the technology powering AI, and the investment dollars behind it, have advanced in recent years, so have the stakes.

“It’s really like God,” Hinton said.

Hinton is one of a growing number of prominent technologists who speak about AI using language once reserved for the divine. OpenAI CEO Sam Altman has called his company’s technology a “magic intelligence in the sky,” while Peter Thiel, co-founder of PayPal and Palantir, has even argued that AI could help bring about the Antichrist.

Will AI bring condemnation or salvation?

There are plenty of skeptics who doubt the technology, such as Dylan Baker, a former Google employee and lead research engineer at the Distributed AI Research Institute, which studies the harmful impacts of AI.

“I think oftentimes they’re operating from magical, fantastical thinking informed by a lot of science fiction,” Baker said. “They’re really detached from reality.”

Chatbots like ChatGPT have become popular only recently, but certain Silicon Valley circles have been prophesying the power of AI for decades.

“We’re trying to wake people up,” Hinton said. “To get the public to understand the risks so that the public pressures politicians to do something about it.”

While researchers like Hinton warn of the existential threat they believe AI poses to humanity, on the other side of the spectrum are CEOs and theorists who believe we are approaching a kind of technological apocalypse that will usher in a new era of human evolution.

In an essay published last year titled “Machines of Loving Grace: How AI Could Transform the World for the Better,” Anthropic CEO Dario Amodei lays out his vision for the future “if everything goes right with AI.”

The AI entrepreneur predicts “the defeat of most diseases, the growth in biological and cognitive freedom, the lifting of billions of people out of poverty to share in the new technologies, a renaissance of liberal democracy and human rights.”

Amodei prefers the phrase “powerful AI,” while others use terms such as “the singularity” and “artificial general intelligence (AGI).” Although proponents of these concepts often disagree on how to define them, the terms broadly refer to a hypothetical future point at which AI surpasses human-level intelligence and triggers rapid, irreversible changes to society.

Computer scientist and author Ray Kurzweil has been predicting since the 1990s that humans will one day merge with technology, a concept known as transhumanism.

“We’re not going to be able to tell what comes from our brains and what comes from AI. It’s all going to be embedded within ourselves, and it’s going to make us more intelligent,” Kurzweil said.

In his latest book, “The Singularity Is Nearer: When We Merge with AI,” Kurzweil doubles down on his earlier predictions. He believes that by 2045 we will have “increased our own intelligence by a million times.”

“Yes,” he eventually admitted when asked whether he considered AI his religion. It informs his sense of purpose.

“My thoughts about the future and the future of technology, and how quickly it’s coming, affect my attitude toward being here, what I’m doing and how I can influence other people,” he said.

Apocalyptic visions bubble up

Despite Thiel’s explicit invocation of the language of the Apocalypse, the positive visions of AI’s future are more “apocalyptic” in the historical sense of the word.

“In the ancient world, the apocalyptic is not negative,” explains Domenico Agostini, a professor at the University of Naples L’Orientale who studies ancient apocalyptic literature. “We have completely changed the semantics of this word.”

The term “apocalypse” comes from the Greek word “apokalypsis,” meaning “revelation.” Although often associated with the end of the world, apocalypses in ancient Jewish and Christian thought were a source of encouragement in times of hardship and persecution.

“God promises a new world,” said Robert Geraci, a professor who studies religion and technology at Knox College. “To occupy that new world, we will have glorious new bodies that triumph over the evil we all experience.”

Geraci first noticed apocalyptic language being used to describe AI’s potential in the early 2000s. Kurzweil and other theorists eventually prompted him to write his 2010 book, “Apocalyptic AI: Visions of Heaven in Robotics, Artificial Intelligence, and Virtual Reality.”

The language reminded him of early Christianity. “Only we’re going to slide God out of it and slide in … perhaps your choice of cosmic science laws to do this, and we will have the same kind of glorious future,” he said.

Geraci says this kind of language hasn’t changed much since he began studying it. What surprises him is how widespread it has become.

“What was once very weird is kind of everywhere,” he said.

Has Silicon Valley finally found its god?

One factor in the growing cult of AI is profitability.

“Twenty years ago, that fantasy, whether true or not, didn’t actually generate a lot of money,” Geraci said. But now, “Sam Altman has a financial incentive to say that AGI is right around the corner.”

But Geraci, who argues that ChatGPT is “not remotely, vaguely, plausible,” believes there may be more driving this phenomenon.

Historically, the tech world has been notoriously short on religion. Its secular reputation precedes it so strongly that one episode of the satirical HBO comedy series “Silicon Valley” revolves around “outing” a coworker as a Christian.

Rather than viewing the skeptical tech world’s worship of AI as ironic, Geraci thinks it is causal.

“We humans are deeply, deeply, inherently religious,” he said, adding that the impressive technology behind AI may appeal to people in Silicon Valley who have already “put aside their usual approach to transcendence and meaning.”

No religion is without its skeptics

Not all Silicon Valley CEOs have converted.

“When people in the tech industry talk about building this one true AI, it’s as if they think they’re creating God or something,” Meta CEO Mark Zuckerberg said on a podcast last year while promoting his company’s own venture into AI.

While transhumanist theories like Kurzweil’s have become more widespread, they are still not ubiquitous within Silicon Valley.

“The scientific case for that is no stronger than for a religious afterlife,” argues Max Tegmark, a physicist and machine learning researcher at the Massachusetts Institute of Technology.

Like Hinton, Tegmark speaks openly about the potential risks of unregulated AI. In 2023, as president of the Future of Life Institute, Tegmark helped spearhead an open letter calling on powerful AI labs to “immediately pause” the training of their systems.

The letter gathered more than 33,000 signatures, including those of Elon Musk and Apple co-founder Steve Wozniak. Tegmark considers the letter a success because it helped “mainstream the conversation” about AI safety, but he believes his work is not yet finished.

With regulations and safeguards in place, Tegmark believes AI can be used as a tool for curing illnesses and boosting human productivity. But he argues it is essential to steer clear of the “very bordered” race that some companies are running.

“There are many stories, both in religious texts and, for example, in ancient Greek mythology, about how when humans start playing god, it ends badly,” he said. “And I feel there’s a lot of hubris in San Francisco right now.”

___

Associated Press religion coverage receives support through the AP’s collaboration with The Conversation US, with funding from Lilly Endowment Inc. The AP is solely responsible for this content.